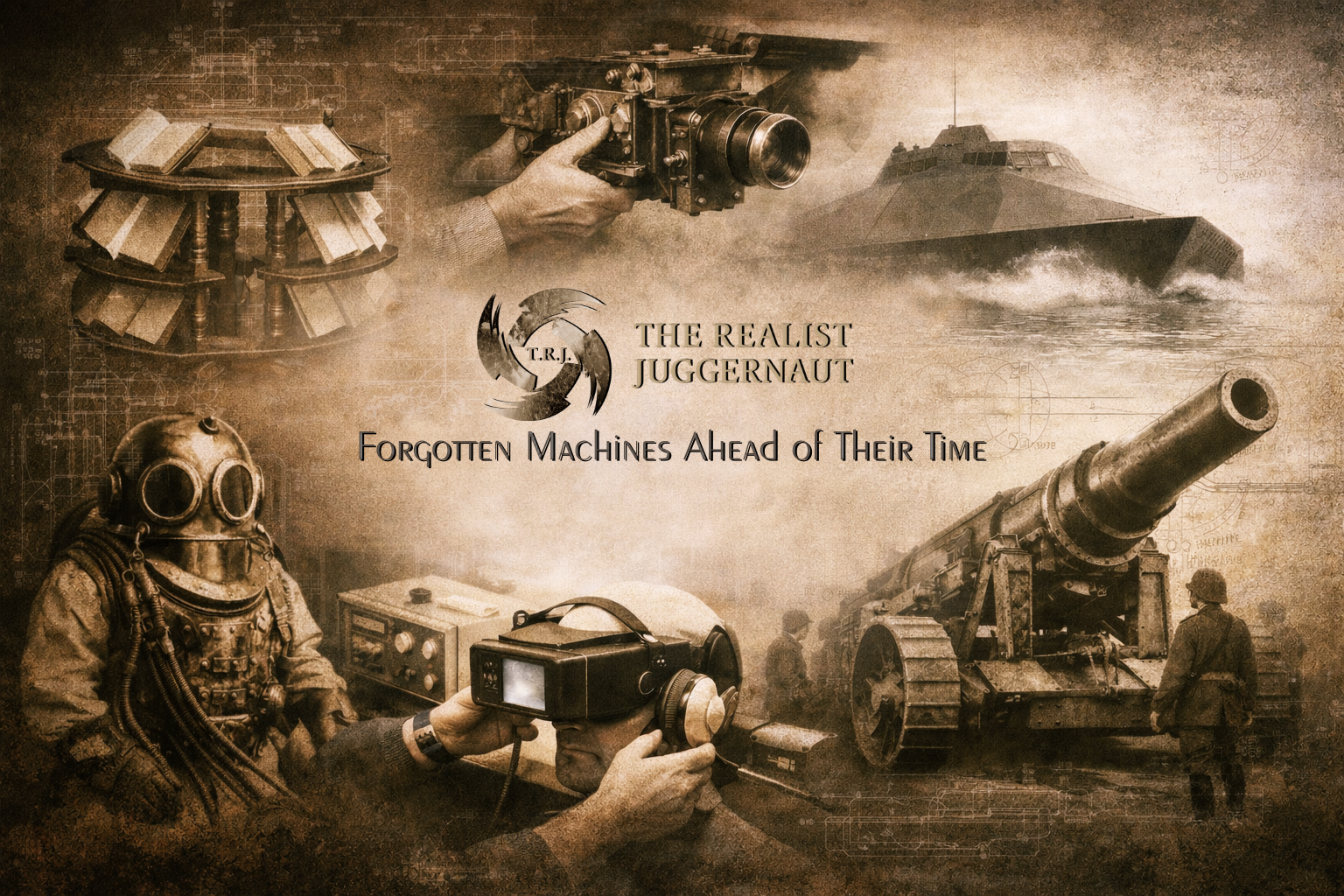

Forgotten Machines, Early Prototypes, and the Unusual Experiments That Quietly Built the Modern World

When people imagine the origins of modern technology, they often picture a clean, orderly progression. In that version of history, innovation unfolds step by step: crude machines gradually improve, inventions build neatly on one another, and over time the world arrives at the computers, smartphones, and global communication networks that define the modern age. It is a comfortable story because it feels logical, predictable, and controlled.

The real history of technology is nothing like that.

Instead of a smooth line of progress, innovation more closely resembles a field of scattered sparks. Ideas appear suddenly in unexpected places. Engineers experiment with designs that seem impossibly advanced for their time. Some inventions succeed immediately, while others vanish for decades before their importance is recognized. Many are created to solve very specific problems, yet the principles behind them later shape entirely different industries.

In other words, the future rarely arrives in a straight line.

Throughout history, inventors, scientists, and engineers have built machines that looked strange, impractical, or even absurd to the people who first encountered them. Early prototypes often seemed disconnected from everyday life. They were expensive, fragile, or difficult to operate. Some required entirely new scientific understanding before their potential could even be recognized.

Yet hidden inside those experimental designs were the seeds of the world that would eventually emerge.

Some of these machines were born in laboratories where researchers were attempting to solve problems no one else had even noticed yet. Others were built in factories, universities, or military workshops where urgent demands forced engineers to attempt solutions that had never been tried before. Wars accelerated many of these developments, pushing technology forward at a pace that peaceful industries rarely matched. Exploration did the same, forcing humanity to invent new tools for environments that had never been navigated before — the depths of the ocean, the upper atmosphere, and eventually space itself.

Many of the inventions that came out of those moments were not meant to last forever. They were prototypes, experiments, or transitional machines. Some were replaced almost immediately by better designs. Others were simply forgotten as the world moved on to new challenges.

But the ideas embedded inside those machines did not disappear.

A mechanical research device designed to help scholars consult multiple books at once quietly anticipated modern information management. A heavy diving suit built to allow humans to survive underwater helped open the path toward deep-sea exploration. A portable telephone demonstrated on a busy city street proved that communication no longer needed to be tied to wires or fixed locations. A small pointing device introduced during a computer demonstration in 1968 would eventually become one of the most familiar tools in modern computing.

In each case, the invention itself was only part of the story.

What truly mattered was the concept it introduced — the realization that a new capability was possible. Once that realization existed, the idea could evolve, improve, and eventually spread across industries and societies.

Seen this way, the history of technology becomes less about finished products and more about moments of discovery. It is a record of people pushing beyond the assumptions of their own time, building machines that allowed the next generation to imagine something larger.

The devices and experiments explored in this timeline represent those moments. Some appear crude by modern standards. Others look surprisingly familiar, as though they belong in the present rather than the past. All of them, however, share a common trait: each one captured a glimpse of the future long before the world was ready to fully understand it.

Taken together, these inventions form a hidden map of innovation — a record of how human curiosity repeatedly forced open new possibilities. They remind us that progress rarely moves in neat steps. It advances through risk, experimentation, and the willingness to build something that may not make sense yet.

And more often than not, the machines that look the strangest at first are the ones that quietly reshape the world.

The Library Wheel: Managing Knowledge Before Computers

Centuries before computers transformed the way information is stored, organized, and retrieved, scholars faced a problem that may sound surprisingly familiar today: knowledge was expanding faster than the systems designed to manage it.

During the Renaissance and early modern period, Europe experienced an explosion in written material. The invention of the printing press in the mid-15th century had dramatically accelerated the production of books, pamphlets, scientific treatises, and philosophical works. Libraries that once held only a limited number of manuscripts began to grow rapidly, and with that growth came an entirely new challenge. Scholars studying complex subjects were no longer consulting a single text; they were comparing ideas across many different volumes at once.

A philosopher examining classical works might need to reference Aristotle, Plato, and later commentaries simultaneously. A scientist studying astronomy could be comparing multiple observational records, charts, and theoretical writings at the same time. Legal scholars, theologians, and historians all faced the same obstacle: the information they needed existed across many separate books, each heavy, fragile, and difficult to manage within a crowded reading space.

Moving stacks of large volumes around a reading desk was inefficient and physically demanding. Many scholarly books of the period were enormous, sometimes weighing several kilograms, and handling multiple open texts quickly became impractical. Scholars needed a way to keep numerous references available at once without constantly rearranging piles of paper and parchment.

To address this problem, craftsmen and engineers devised a mechanical device known as the book wheel, often referred to today as a library wheel. The best-known design was published by the Italian engineer Agostino Ramelli in 1588.

The structure looked almost like a giant wooden wheel mounted vertically on a frame. Around its outer rim were multiple angled platforms designed to hold open books. Each platform supported a volume at a readable angle, allowing a scholar to keep several works open simultaneously. The wheel itself could rotate smoothly, bringing different books into view as needed.

What made the design particularly clever was the internal gearing system that kept each book upright as the wheel turned. As the structure rotated, the shelves remained level, preventing the books from sliding or closing. This meant a reader could spin the wheel to access another text while the previously opened pages remained exactly where they had been left.

In effect, the library wheel allowed scholars to move through multiple sources of information without ever leaving their seat.

The machine served as a mechanical solution to what modern technologists would recognize as parallel information access. Instead of sequentially opening one book after another, researchers could cross-reference ideas, quotations, and arguments across several texts at once. For historians, theologians, and scientists attempting to synthesize large bodies of knowledge, the ability to quickly switch between sources represented a significant improvement in efficiency.

Although the library wheel may appear quaint by modern standards, its underlying purpose reveals something fundamental about the long arc of technological development. The challenge it addressed—how to manage expanding amounts of information—remains one of the defining problems of every technological age.

Centuries later, librarians would develop indexing systems and classification methods to help organize growing collections. Card catalogs would allow readers to locate specific texts more quickly. Eventually, digital databases would transform the way information is searched and retrieved entirely. Today, search engines and artificial intelligence systems can process millions of documents in seconds.

Yet the intellectual problem behind all of those innovations was already visible in the reading rooms of early modern Europe.

The library wheel represents one of the earliest mechanical attempts to solve that challenge. Long before algorithms and digital networks existed, scholars were already building tools designed to navigate vast landscapes of information. The device stands as a reminder that humanity’s struggle to manage knowledge did not begin with computers—it began the moment written ideas started accumulating faster than any single mind could keep track of them.

In that sense, the library wheel was more than a clever piece of furniture. It was an early step in a technological journey that would eventually lead to the data-driven world we now inhabit.

A rotating book wheel designed to allow scholars to consult multiple open volumes simultaneously, an early mechanical solution for managing large amounts of written information.

Archival photograph — original photographer unknown.

The Old Gentleman: Early Engineering for Deep-Sea Exploration

Human curiosity has always driven exploration into places that seem fundamentally hostile to life. Deserts, polar ice, mountain peaks, and eventually space have all demanded technologies capable of protecting the fragile human body from environments it was never meant to endure. Long before spacecraft and deep-sea submersibles existed, however, one of the most mysterious and unforgiving frontiers was already capturing the imagination of engineers and explorers: the depths of the ocean.

For most of human history, the underwater world remained largely inaccessible. Divers working in coastal waters relied on breath-holding techniques or simple equipment that allowed only brief descents. Early diving bells provided a limited solution by trapping air inside large chambers lowered beneath the surface, but these devices restricted mobility and were difficult to operate in deeper waters.

As maritime trade expanded during the 18th and 19th centuries, the need for better underwater equipment became increasingly urgent. Shipwrecks containing valuable cargo lay scattered across the seafloor. Ports and harbors required underwater maintenance. Bridges, docks, and underwater foundations demanded construction and repair beneath the surface. Engineers began searching for ways to keep divers safe while allowing them to work longer and at greater depths.

This need led to the development of increasingly sophisticated diving equipment, including one of the most unusual experimental suits associated with early diving technology: a massive leather-and-metal diving apparatus believed to date to the 18th century, later nicknamed “The Old Gentleman.”

Unlike modern diving suits made from flexible synthetic materials, this early design resembled something closer to a mechanical shell built around the human body. The suit was constructed from heavy leather reinforced with metal rings and structural joints intended to prevent collapse under water pressure. Its design attempted to address one of the greatest challenges faced by early divers: the crushing force exerted by deep water.

Water pressure rises quickly with depth: every ten meters of seawater adds roughly one atmosphere, so a diver at ten meters already experiences about twice the pressure felt at the surface. Without protection, the human body cannot work under these forces for long. Early engineers understood this and sought ways to build protective structures that could shield divers while still allowing them to move and perform tasks.
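A rough sense of scale helps here. The sketch below estimates absolute pressure at a given depth using the standard hydrostatic relation (surface pressure plus density times gravity times depth). The seawater density, gravity, and surface pressure values are textbook approximations chosen for illustration, not figures from any historical record, and the function names are hypothetical.

```python
# Rough hydrostatic pressure estimate. All constants are illustrative
# textbook approximations, not measurements tied to any historical suit.
SEAWATER_DENSITY = 1025.0      # kg/m^3 (assumed typical seawater)
GRAVITY = 9.81                 # m/s^2
SURFACE_PRESSURE = 101_325.0   # Pa, one standard atmosphere


def absolute_pressure_pa(depth_m: float) -> float:
    """Return absolute pressure in pascals at the given depth in meters."""
    if depth_m < 0:
        raise ValueError("depth must be non-negative")
    return SURFACE_PRESSURE + SEAWATER_DENSITY * GRAVITY * depth_m


def pressure_in_atmospheres(depth_m: float) -> float:
    """Return absolute pressure as a multiple of surface pressure."""
    return absolute_pressure_pa(depth_m) / SURFACE_PRESSURE


for depth in (0, 10, 30):
    print(f"{depth:>3} m: {pressure_in_atmospheres(depth):.2f} atm")
```

Under these assumptions, a diver at 10 meters is exposed to roughly twice the surface pressure, and at 30 meters to roughly four times.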

The helmet of the suit was perhaps its most striking feature. Made of metal and fitted with thick glass viewing ports, it provided the diver with visibility while maintaining a sealed barrier between the wearer and the surrounding water. Air was supplied through hoses connected to the surface, allowing the diver to breathe while remaining underwater for extended periods.

Despite its ingenuity, movement within the suit was limited. The rigid construction meant that divers could not move as freely as they can in modern equipment. Walking, bending, and manipulating tools underwater required strength and careful coordination. The suit itself was extremely heavy when out of the water, and it often required assistance from multiple people just to help a diver enter or exit the apparatus.

Even with these limitations, designs like “The Old Gentleman” represented a significant leap forward in underwater engineering.

Such suits allowed divers to descend deeper than earlier equipment permitted, opening the door to new types of work beneath the sea. Salvage teams used diving suits to recover cargo and materials from shipwrecks. Engineers employed divers during the construction of underwater infrastructure, including harbor walls, bridge foundations, and marine pipelines. Explorers also began using diving equipment to study underwater environments that had previously remained beyond reach.

These early suits did more than solve immediate practical problems. They also laid the groundwork for an entirely new field of engineering: the development of technology capable of operating safely under extreme environmental pressure.

Over time, improvements in materials and design led to more flexible diving suits and better air supply systems. By the 20th century, advances in physics and engineering allowed the development of pressurized diving systems, underwater habitats, and eventually submersible vehicles capable of descending thousands of meters below the surface.

Today, remotely operated vehicles and advanced deep-sea submersibles explore parts of the ocean that human divers cannot reach. These machines can withstand immense pressures, capture high-resolution video, and collect scientific samples from the deepest parts of the ocean floor.

Yet the roots of that technology can be traced back to experimental devices like “The Old Gentleman.” These early designs represented the first serious attempts to build equipment that allowed humans to safely enter one of the most extreme environments on Earth.

What began as a heavy leather-and-metal suit designed for practical underwater work ultimately helped establish the engineering principles that still guide deep-sea exploration today. Long before robotic submarines and advanced pressure-resistant materials existed, pioneers were already building the tools that would allow humanity to push deeper into the unknown.

Early diving apparatus known as “The Old Gentleman,” a heavy leather-and-metal suit believed to date to the 18th century and later preserved in museum records.

Archival photograph — original photographer unknown.

The First Computer Mouse: A Small Device That Changed Human–Computer Interaction

In the early years of computing, interacting with a machine required patience, technical knowledge, and a willingness to work within rigid command systems. Most computers during the 1950s and early 1960s were controlled through punch cards, typed commands, or specialized input terminals operated by trained technicians. For many people outside scientific or engineering fields, the machines felt distant and inaccessible.

A group of researchers working in California began to question whether computers could be made far more intuitive.

At the Stanford Research Institute (SRI), engineer Douglas Engelbart was exploring ways to improve how humans interacted with digital information. His work focused on what he described as the goal of “augmenting human intellect”—the idea that computers could become tools that helped people organize ideas, analyze information, and collaborate more effectively rather than simply performing calculations.

Achieving that vision required a new kind of interface.

In 1964, Engelbart and his team built an experimental pointing device that allowed a user to control a cursor on a computer display by moving their hand across a surface. The prototype looked simple: a small rectangular wooden box with a single button mounted on top and a cable extending from the back. Beneath the device were two perpendicular metal wheels. As the box moved across a desk, the wheels translated that motion into horizontal and vertical movement on the screen.

The mechanism allowed a user to guide a pointer directly through physical motion.
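The way two perpendicular wheels split a hand movement into screen motion can be sketched in a few lines. This is an illustrative model, not a reconstruction of the SRI hardware or software: the `Cursor` class, the tick counts, the `pixels_per_tick` scale, and the screen dimensions are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Cursor:
    """Hypothetical on-screen pointer driven by two perpendicular wheels."""
    x: int = 0
    y: int = 0
    width: int = 640    # assumed display width in pixels
    height: int = 480   # assumed display height in pixels

    def apply_wheel_motion(self, x_wheel_ticks: int, y_wheel_ticks: int,
                           pixels_per_tick: int = 2) -> None:
        """Move the cursor by the rotation each wheel reported.

        One wheel only registers side-to-side travel and the other only
        forward-and-back travel, so a diagonal hand movement arrives as
        two independent tick counts.
        """
        new_x = self.x + x_wheel_ticks * pixels_per_tick
        new_y = self.y + y_wheel_ticks * pixels_per_tick
        # Clamp so the pointer never leaves the display area.
        self.x = min(max(new_x, 0), self.width - 1)
        self.y = min(max(new_y, 0), self.height - 1)


cursor = Cursor()
cursor.apply_wheel_motion(x_wheel_ticks=5, y_wheel_ticks=3)
print(cursor.x, cursor.y)
```

Treating the two axes independently is the design choice that matters here: each wheel needs to know nothing about the other, and the display simply adds the two contributions.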

At a time when most computers were controlled through typed instructions, the idea of manipulating objects visually on a display was radical. The device made it possible to select text, move elements on a screen, and interact with digital information in ways that felt far more natural than keyboard commands alone.

The small wooden device eventually acquired an informal nickname: the mouse.

The origin of the name is commonly attributed to the cable extending from the back of the device, which resembled the tail of a small animal as the unit moved across a desk. Although the name began as casual laboratory shorthand, it quickly became the term used to describe the device in demonstrations and documentation.

The device remained largely experimental until Engelbart publicly demonstrated it in 1968 during what would later become known as the “Mother of All Demos.” During that presentation at the Fall Joint Computer Conference in San Francisco, the mouse appeared alongside other groundbreaking technologies, including graphical computer displays, hypertext navigation, collaborative editing systems, and window-based interfaces.

Together, these ideas represented a new vision of computing centered on direct human interaction.

The wooden prototype shown in early photographs may appear primitive compared to modern devices, but the concept it introduced proved revolutionary. The mouse provided a practical method for navigating graphical interfaces, allowing users to control digital environments through simple hand movements.

Over the following decades, the idea would evolve as engineers refined the design. Mechanical wheels were eventually replaced by ball-based mechanisms, which were later superseded by optical sensors capable of detecting motion across a surface with high precision. The shape and ergonomics of the device improved as personal computers became widespread in homes and offices.

Yet the core principle remained unchanged.

Today, nearly every graphical computing system—from desktop computers to complex design software—relies on some form of pointer control derived from the same concept first demonstrated by Engelbart’s prototype.

The small wooden box created in a research laboratory during the 1960s introduced one of the most fundamental tools of modern computing. What began as an experimental interface device ultimately transformed how humans interact with digital information, making computers more accessible to millions of users around the world.

In the long history of technological innovation, few devices illustrate the power of a simple idea more clearly than the first computer mouse.

Early wooden prototype of the computer mouse developed by Douglas Engelbart and his team at the Stanford Research Institute. The device used two perpendicular wheels to translate hand movement into cursor motion on a computer display, introducing a new method of interacting with digital systems.

Archival photograph — original photographer unknown.

Fingerprints and the Birth of Biometric Databases

Long before digital identity systems, biometric scanners, and automated forensic software existed, governments faced a persistent and complicated challenge: how to identify individuals reliably within rapidly growing populations. As cities expanded and mobility increased during the late nineteenth and early twentieth centuries, traditional identification methods—names, photographs, and written descriptions—proved unreliable. People could change names, falsify documents, or simply move between jurisdictions without leaving clear records behind.

Law enforcement agencies around the world needed a method of identification that could not easily be altered or forged.

Fingerprint analysis emerged as one of the earliest scientific solutions to that problem. Researchers studying human skin patterns discovered that the ridges and loops found on fingertips form unique configurations that remain stable throughout a person’s life. Even identical twins have different fingerprint patterns. Once this principle became widely accepted, fingerprints quickly transformed from a curiosity studied by scientists into a powerful tool for law enforcement and identification.

By the early twentieth century, fingerprinting had begun to replace older identification methods used in policing, such as anthropometry—a system that relied on body measurements like arm length and head circumference. Fingerprints proved far more reliable and easier to collect. A small inked impression on paper could capture a pattern that uniquely identified an individual.

In the United States, the Federal Bureau of Investigation became one of the central institutions responsible for organizing and expanding fingerprint identification systems. As the FBI’s Identification Division grew during the early decades of the twentieth century, the agency began assembling what would become one of the largest fingerprint archives ever created.

By the 1940s, the scale of the operation was enormous.

Entire rooms inside federal buildings were filled with towering filing cabinets that contained millions of fingerprint cards. Each card represented a single individual whose prints had been recorded during an arrest, military enlistment, or official identification process. The sheer volume of records required a carefully structured system for organization and retrieval.

These collections were far more than simple archives.

They represented one of the earliest large-scale biometric databases—long before the term existed.

To manage this vast amount of information, technicians relied on classification systems designed to group fingerprints based on visible patterns and structural characteristics. Prints were categorized according to basic pattern types such as loops, whorls, and arches. Within those categories, additional features such as ridge endings, bifurcations, and other microscopic characteristics helped narrow potential matches.

When investigators needed to identify a suspect or match a set of prints recovered from a crime scene, trained examiners would begin the painstaking process of manual comparison. Technicians searched through classified records to locate potential matches, then visually examined the ridge patterns to confirm whether two prints belonged to the same person.
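The value of classifying cards before comparing them can be illustrated with a small sketch. It is a deliberately simplified model, not the classification scheme the FBI actually used: the `CardArchive` class, the three-finger pattern lists, and the card identifiers are all hypothetical. The point is only that grouping cards by pattern type shrinks the pile an examiner has to inspect by eye.

```python
from collections import defaultdict


class CardArchive:
    """Toy card file grouped by per-finger pattern type (loop, whorl, arch)."""

    def __init__(self):
        # Each "drawer" holds the cards that share one pattern sequence.
        self._drawers = defaultdict(list)

    def file_card(self, card_id, patterns):
        """Store a card in the drawer matching its pattern sequence."""
        self._drawers[tuple(patterns)].append(card_id)

    def candidates(self, patterns):
        """Return only the cards whose coarse patterns match the query."""
        return list(self._drawers.get(tuple(patterns), []))

    def total_cards(self):
        return sum(len(drawer) for drawer in self._drawers.values())


archive = CardArchive()
archive.file_card("card-001", ["loop", "whorl", "loop"])
archive.file_card("card-002", ["arch", "arch", "loop"])
archive.file_card("card-003", ["loop", "whorl", "loop"])

# Only the shortlist would go on to detailed ridge-by-ridge comparison.
shortlist = archive.candidates(["loop", "whorl", "loop"])
print(f"{len(shortlist)} of {archive.total_cards()} cards need manual review")
```

In this model the coarse pattern types act as an index, while the fine ridge details remain a job for the human examiner.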

The work required extraordinary attention to detail. Analysts often spent hours or days comparing small variations in ridge patterns to determine whether a fingerprint truly matched a record in the archive. A single identification might require reviewing hundreds or even thousands of cards before a definitive match could be confirmed.

Despite the labor-intensive nature of the process, these early fingerprint systems dramatically improved the ability of law enforcement agencies to track criminal records and identify individuals across multiple jurisdictions. A person arrested in one state could be connected to crimes committed elsewhere if their fingerprints were already stored in the system. Investigators could link suspects to crime scenes using physical evidence left behind.

For the first time in modern policing, identity could be established reliably from a physical characteristic rather than from names or documents.

The success of fingerprint identification laid the foundation for an entire field of forensic science. As technology advanced during the twentieth century, computers began to automate many of the tasks that once required manual comparison. Digital scanners replaced ink and paper cards. Sophisticated algorithms were developed to analyze ridge patterns and locate potential matches within enormous digital databases.

Today, automated fingerprint identification systems (AFIS) and other biometric platforms can search millions of records within seconds. The concept of biometric identification has expanded beyond fingerprints to include facial recognition, iris scans, voice recognition, and DNA analysis.

Yet the roots of all these technologies can be traced back to the rooms filled with paper fingerprint cards and the technicians who patiently analyzed them by hand.

Those early archives represented the beginning of a new approach to identity—one that recognized the human body itself as a source of unique, verifiable information. Long before modern computers transformed forensic science, the foundation for biometric databases had already been built through careful observation, classification, and the systematic study of fingerprints.

Rows of fingerprint identification records maintained by the Federal Bureau of Investigation during the 1940s, representing one of the earliest large-scale biometric record systems.

Archival photograph — original photographer unknown.

The 1968 Demonstration That Changed Computing

Few moments in the history of technology have reshaped an entire industry as dramatically as the public computer demonstration that took place on December 9, 1968. What appeared at the time to be an unusual research presentation would later be recognized as one of the most important events in the development of modern computing.

The presentation was delivered by computer scientist Douglas Engelbart at the Fall Joint Computer Conference in San Francisco. Engelbart and his team from the Stanford Research Institute had spent years developing experimental technologies designed to make computers more interactive and accessible to human users. Their work culminated in a ninety-minute live demonstration that would later earn the nickname “The Mother of All Demos.”

To understand why the event was so significant, it is important to remember what computers looked like in the late 1960s.

At that time, computers were enormous machines typically housed in universities, government laboratories, and large corporations. They filled entire rooms and required specialized operators to run them. Interaction with these machines was highly technical. Most users entered commands through punch cards or typed instructions into text-based terminals. The process was slow, rigid, and far removed from the intuitive interfaces that modern users take for granted.

Computers were powerful tools for calculation and data processing, but they were not yet designed for direct human interaction.

Engelbart believed that could change.

His research focused on what he called “augmenting human intellect.” Rather than viewing computers solely as calculating machines, he envisioned them as tools that could help people think, organize information, and collaborate more effectively. To achieve that vision, computers needed interfaces that humans could interact with naturally.

During the 1968 demonstration, Engelbart revealed several technologies that would eventually define the future of computing.

One of the most striking innovations was a small handheld pointing device made of wood with two perpendicular wheels on its underside. This device—later known simply as the computer mouse—allowed a user to move a cursor across a screen with hand movements rather than keyboard commands.

For audiences accustomed to text terminals and punch-card computing, the idea of physically guiding a pointer across a display was extraordinary.

But the mouse was only one part of the system.

Engelbart also demonstrated a graphical interface in which information appeared on the screen as visual elements rather than lines of typed text. The display included windows that could contain different documents or tasks simultaneously. Users could select and manipulate objects directly on the screen, switching between programs and editing documents in real time.

This concept of windowed computing would eventually become the foundation for the graphical interfaces of modern operating systems.

Another revolutionary idea presented during the demonstration was hypertext—a method of linking pieces of information so users could navigate between related documents instantly. Instead of reading information in a strict linear order, users could jump between connected ideas, creating a web-like structure of knowledge.
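The linking idea described above can be modeled as a small data structure: documents that carry named links to other documents. The sketch below is a modern illustration only and does not reflect how Engelbart's system was implemented. The `DOCUMENTS` collection, its contents, and the function names are hypothetical.

```python
# Hypothetical mini-collection: each document has text plus named links.
DOCUMENTS = {
    "mouse": {
        "text": "A pointing device.",
        "links": {"first shown": "demo"},
    },
    "demo": {
        "text": "The 1968 presentation.",
        "links": {"pointing device": "mouse", "linking": "hypertext"},
    },
    "hypertext": {
        "text": "Linked documents.",
        "links": {},
    },
}


def follow_link(current_id, label):
    """Jump from one document to the document a named link points to."""
    return DOCUMENTS[current_id]["links"][label]


def reachable_from(start_id):
    """List every document that can be reached by following links."""
    seen = []
    pending = [start_id]
    while pending:
        doc_id = pending.pop()
        if doc_id in seen:
            continue  # links may loop back, so skip documents already visited
        seen.append(doc_id)
        pending.extend(DOCUMENTS[doc_id]["links"].values())
    return seen


print(follow_link("mouse", "first shown"))
print(sorted(reachable_from("mouse")))
```

Because links can point back to earlier documents, the structure is a web rather than a sequence, which is why the traversal has to remember what it has already visited.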

The implications of hypertext would later become central to the development of the World Wide Web.

The demonstration also showcased early forms of collaborative computing, allowing multiple people to work on shared documents simultaneously. Participants could edit text together, exchange ideas through on-screen communication tools, and interact within the same digital workspace.

At a time when most computers were isolated machines used by a single operator, the idea of networked collaboration was far ahead of its era.

The system Engelbart demonstrated combined several technologies working together: a computer display capable of showing graphical elements, an input device that allowed precise control of a cursor, software capable of linking information through hypertext, and a communication system that connected multiple users.

Taken together, these ideas represented a complete reimagining of how humans could interact with computers.

Many of the technologies shown in 1968 would not reach widespread public use for another decade or more. The concepts demonstrated by Engelbart’s team later influenced research laboratories such as Xerox PARC, where engineers refined graphical user interfaces and developed new computer designs during the 1970s.

From there, the ideas spread into commercial products.

Apple’s Macintosh computers helped bring graphical interfaces and mouse-driven navigation to a broader audience in the 1980s. Microsoft’s Windows operating system later extended these concepts across millions of personal computers. Today, the same fundamental principles appear in smartphones, tablets, and virtually every modern digital device.

What makes the 1968 demonstration remarkable is how many of these now-familiar technologies appeared together for the first time.

Windowed interfaces, interactive graphics, collaborative editing, hypertext navigation, and the computer mouse were all presented in a single event decades before they became everyday tools. At the time, the audience witnessed something that looked futuristic and experimental.

Today, the ideas introduced during that presentation are so deeply embedded in daily life that most users rarely think about them at all.

The mouse pointer moving across a screen, the windows layered on a desktop, the links connecting information across the internet—all trace their origins back to a research demonstration in 1968 that quietly reshaped the future of computing.

Historic demonstration of early interactive computing technologies including the computer mouse, graphical user interface, hypertext, and word processing systems in 1968.

Archival photograph — original photographer unknown.

The First Mobile Phone Call

For most of the twentieth century, the concept of a telephone was inseparable from the place where it sat. Phones were mounted on walls, set on office desks, or installed in homes, where wires connected them to the communication grid beneath the streets. Conversations could travel across continents, but the person making the call remained physically anchored to a specific location.

That limitation defined telecommunications for decades.

Even as long-distance networks expanded and switching technology improved, the basic structure of telephone communication remained unchanged: wires connected buildings to central exchanges, and every call traveled through those physical connections.

Engineers had long imagined a different possibility—one in which telephone communication could move freely with the person using it rather than remaining tied to a fixed point. Early attempts at mobile communication existed in the form of large radio-based car phones used by police departments, emergency services, and some wealthy business executives. These systems relied on radio transmitters installed in vehicles and were limited by the small number of available radio channels.

The idea of a portable, handheld phone seemed far more ambitious.

Achieving that goal required solving several major engineering problems. A truly mobile phone needed to be compact enough to carry, powerful enough to transmit signals to nearby cell towers, and capable of connecting to a network designed to handle thousands—or eventually millions—of simultaneous wireless users.

By the early 1970s, engineers at Motorola had been working intensely on this challenge.

On April 3, 1973, Motorola publicly demonstrated its handheld cellular telephone in New York City. Engineer Martin Cooper placed the historic call using a prototype device that would later become known as the Motorola DynaTAC, while Motorola executive John F. Mitchell became one of the most visible public figures associated with the device’s early demonstrations and promotion.

The call itself was symbolic as well as historic. Cooper reportedly dialed a rival engineer at Bell Labs, announcing that he was speaking from a real handheld cellular phone.

The device used for the demonstration looked enormous compared to modern smartphones. The prototype measured nearly ten inches tall, weighed over two pounds, and required a battery system that provided only a limited amount of talk time. It resembled a small brick more than the sleek devices carried today.

Yet despite its size and limitations, the significance of the demonstration was unmistakable.

For the first time, a public telephone call had been placed from a handheld cellular device that moved with the user rather than staying fixed to a wall, a desk, or a vehicle.

The demonstration proved that true mobile communication was possible.

Behind that moment was an emerging technological concept known as cellular networking. Instead of relying on a single powerful transmitter to cover a large geographic area, cellular systems divide regions into smaller “cells,” each served by its own radio tower. As a user moves through different areas, the call is automatically transferred between towers, allowing continuous communication while traveling.

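This architecture allowed networks to support far more users than earlier radio-based phone systems. Because each tower transmits at low power over a small area, the same radio channels can be reused in cells that are far enough apart not to interfere with one another.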
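The capacity gain from dividing a region into cells can be illustrated with a rough Python sketch. The channel count, cluster size, tower coordinates, and function names below are illustrative assumptions rather than figures from any real network, and distance to the nearest tower stands in for the signal-strength measurements an actual system would rely on.

```python
import math


def simultaneous_calls(total_channels, num_cells, cluster_size):
    """Estimate how many calls a service area can carry at once.

    The available channels are divided among a cluster of `cluster_size`
    neighboring cells, and that pattern repeats across all cells, so
    distant cells reuse the same frequencies.
    """
    channels_per_cell = total_channels // cluster_size
    return channels_per_cell * num_cells


def serving_tower(position, towers):
    """Pick the closest tower (a simple stand-in for strongest signal)."""
    return min(towers, key=lambda name: math.dist(position, towers[name]))


def count_handoffs(route, towers):
    """Count how often the serving tower changes along a route."""
    serving = [serving_tower(point, towers) for point in route]
    return sum(1 for a, b in zip(serving, serving[1:]) if a != b)


# Hypothetical numbers chosen only for illustration.
single_transmitter = simultaneous_calls(420, num_cells=1, cluster_size=1)
cellular_layout = simultaneous_calls(420, num_cells=70, cluster_size=7)

# Two assumed towers 10 km apart and a caller traveling from one to the other.
towers = {"A": (0.0, 0.0), "B": (10.0, 0.0)}
route = [(2.0, 0.0), (4.0, 0.0), (6.0, 0.0), (8.0, 0.0)]
handoffs = count_handoffs(route, towers)
```

With these assumed values, the single transmitter carries 420 calls at once while the 70-cell layout carries 4,200, because every channel is reused in ten separate clusters. The caller on the example route is handed from tower A to tower B once, at the midpoint between them.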

Although the 1973 demonstration showed that handheld phones could work, widespread consumer adoption would take years. Engineers still needed to develop reliable infrastructure, improve battery technology, and reduce the size and cost of the devices themselves.

It was not until the 1980s that the first commercial cellular phones became available to the public, including production versions of the Motorola DynaTAC. Even then, early models were expensive and primarily used by business professionals.

But the direction of the technology was clear.

Over the following decades, mobile phones became smaller, more powerful, and more affordable. Digital cellular networks replaced earlier analog systems. Data transmission capabilities were added alongside voice communication. Eventually, mobile devices evolved into smartphones capable of performing tasks that once required entire desktop computers.

Today, billions of people carry powerful computing and communication devices in their pockets. Smartphones allow instant messaging, video calls, internet access, navigation, photography, and countless other functions—all built on the foundation of wireless communication.

The origin of that transformation can be traced back to a moment on a New York City sidewalk in 1973, when an engineer demonstrated that the telephone no longer needed to remain in one place.

The world had just taken its first step toward truly mobile communication.

Motorola Vice President John F. Mitchell demonstrating an early portable cellular telephone prototype during a public demonstration in New York City in 1973.

Archival photograph — original photographer unknown.

Autonomous Vacuum Concept Prototype: The Early Vision of an Autonomous Home

In the middle of the twentieth century, engineers and appliance manufacturers were not only building new machines—they were also trying to imagine what everyday life might look like decades into the future. The postwar period, particularly the 1950s, was filled with bold predictions about automated homes, intelligent appliances, and domestic technology designed to reduce the time and labor required to maintain a household.

One of the most striking examples of this vision appeared in 1959 at the American National Exhibition in Moscow. The event, held during the height of the Cold War, served as a technological and cultural showcase for the United States. American companies were invited to demonstrate innovations that reflected the country’s industrial capabilities and its vision of modern living.

Among the displays that captured public attention was Whirlpool’s “Miracle Kitchen of the Future.” The exhibit presented an idealized kitchen filled with advanced appliances intended to simplify cooking, cleaning, and household management. Inside that futuristic environment appeared a machine that looked as though it had arrived decades ahead of its time: a concept vacuum cleaner designed to move across the floor automatically.

The device sat low to the floor with a sleek, wedge-shaped body that resembled a small mechanical vehicle rather than a traditional appliance. A circular intake positioned near the top of the machine served as the vacuum inlet, while the underside housed the mechanical components responsible for suction and motion. Unlike conventional vacuum cleaners of the era—which required a person to push a bulky upright unit across the floor—the machine was presented as a self-moving cleaning device.

The concept behind the machine was simple yet forward-looking. Instead of a person performing the repetitive task of vacuuming, the appliance would move across the floor on its own, collecting dust and debris. In demonstrations and promotional material, the device represented the idea that household chores might eventually be handled automatically by machines.

This idea represented a significant shift from how domestic appliances had traditionally functioned. Most household machines of the early twentieth century still required direct human control. Washing machines, vacuum cleaners, and kitchen appliances were designed to reduce labor, but they remained tools operated manually. The concept suggested a different future—one in which machines might eventually handle routine tasks with minimal human involvement.

Technologically, the concept was far ahead of what the electronics of the 1950s could realistically support. The sensors, processors, and navigation systems required for truly autonomous household machines did not yet exist. As a result, the device was best understood as a conceptual prototype, illustrating an idea about the future of domestic automation rather than a practical consumer product ready for mass adoption.

Yet the demonstration carried an important symbolic message. Engineers and designers were already exploring the possibility that machines could take on everyday household responsibilities long before the computing technologies required to make that vision practical had been developed.

The concept also reflected the broader optimism of the era. During the 1950s, the idea of the “automated home” became a recurring theme in industrial design and consumer exhibitions. Companies imagined kitchens equipped with programmed ovens, automated dishwashers, and appliances capable of preparing meals or performing household tasks with minimal supervision. Domestic work, which had historically required hours of daily effort, was increasingly seen as something technology might eventually simplify.

Seen in this context, the device was not an isolated curiosity. It was part of a larger cultural and technological movement that imagined a future where homes would be filled with intelligent machines quietly performing everyday work.

The idea would take decades to become practical.

By the early twenty-first century, advances in computing, sensor technology, and battery design finally made autonomous household machines possible. Modern robotic vacuum cleaners now navigate homes using infrared sensors, cameras, mapping systems, and artificial intelligence algorithms that allow them to avoid obstacles, learn room layouts, and return automatically to charging stations.

The core concept demonstrated in 1959—the idea that a machine might move across a room cleaning the floor without human guidance—has since become an ordinary part of daily life in many homes.

Looking back, the concept stands as an early example of how engineers often imagine future technologies long before the infrastructure exists to support them. Decades before microprocessors and smart sensors made autonomous cleaning machines possible, designers were already presenting the idea of automated household maintenance.

The photograph from the 1959 exhibition captures that moment of anticipation. A small experimental machine rests on the kitchen floor while observers look on with curiosity. At the time, it represented a bold glimpse of what the home of the future might look like. Today, it appears less like science fiction and more like one of the earliest ancestors of the robotic machines that now quietly move through homes around the world performing the same task its designers imagined more than sixty years ago.

Self-propelled vacuum concept demonstrated as part of Whirlpool’s “Miracle Kitchen of the Future” exhibit at the American National Exhibition in Moscow, illustrating an early idea for automated household cleaning decades before modern robotic vacuums.

Archival photograph — original photographer unknown.

Television Before the Modern Screen

Television, like many technologies that now feel ordinary, did not emerge fully formed. The sleek flat-panel displays mounted on walls today are the result of decades of experimentation, design risks, and technological prototypes that attempted to reshape how television could exist inside the home.

During the early years of television, the devices themselves were bulky, heavy, and mechanically complex. Most sets used cathode ray tube (CRT) technology, which required deep cabinets to house the long picture tube and the vacuum-tube electronics that drove it. Televisions of the 1940s and early 1950s often resembled pieces of furniture more than electronic devices. They were built into wooden cabinets designed to blend with living room décor, and their screens were relatively small compared to the size of the cabinet surrounding them.

Yet engineers and designers quickly began asking an important question: did televisions have to look like that?

One of the most striking attempts to rethink the television’s design arrived in the late 1950s with the introduction of the Philco Predicta television. Released by the Philco Corporation in 1958, the Predicta stood out immediately because of its unusual appearance. Instead of embedding the screen inside a large cabinet, the design separated the display from the base unit.

The screen appeared to sit atop the set on a slender support, creating the illusion that the display was floating above the electronics beneath it. The base housed the internal components, while the display portion presented the viewing image in a much more visually distinct way than traditional televisions.

The Predicta’s futuristic look captured public attention. Its styling reflected the broader cultural fascination with space-age design that defined the late 1950s, a time when jet aircraft, rockets, and atomic-age optimism influenced everything from automobiles to household appliances.

Technologically, however, the Predicta faced limitations. The electronics used to power the display were still based on vacuum tube technology, which generated significant heat and required frequent maintenance. Early models were known to experience reliability problems due to the stresses placed on their components.

Despite those technical challenges, the Predicta demonstrated an important shift in thinking: televisions could be designed as modern technology objects, not just pieces of furniture.

A few years later, designers and engineers began exploring an even more ambitious idea.

In 1961, a concept television was presented at the Home Furnishings Market in Chicago that suggested what the future of television might look like. The prototype display was remarkably thin for its time—only about four inches thick—an astonishing design considering that most CRT televisions required far deeper cabinets to house their components.

But the thin display was only part of the concept.

The prototype also included an automatic timing system capable of recording television programs for later playback. This idea introduced a radical shift in how television could be consumed. For decades, viewers had been tied to the broadcast schedule. If a program aired at a particular time, the only way to watch it was to be present when it was transmitted.

The 1961 concept suggested something different: television viewing could become flexible and user-controlled.

The built-in timing system would allow viewers to record programs automatically and watch them later at their convenience. In essence, the prototype anticipated a behavior that would not become widespread until decades later.

This concept anticipated what is now called time-shifted viewing.

In the 1970s and 1980s, home videotape systems such as VHS recorders finally made program recording possible for consumers. By the late 1990s and early 2000s, digital video recorders (DVRs) allowed viewers to store and replay television programs with far greater convenience. Today, streaming platforms and on-demand media services have taken the concept even further, allowing viewers to watch vast libraries of content at any time they choose.

The modern streaming era—where entire seasons of television can be watched whenever convenient—reflects the same core idea that engineers were already exploring in the early 1960s.

Looking back, both the Philco Predicta and the 1961 thin-screen prototype reveal an important pattern in technological history. Designers often imagine future behaviors long before the technology required to support them fully exists. Early prototypes capture the vision, even if the underlying electronics still need decades of improvement.

Flat-screen televisions, digital recording systems, and on-demand viewing may feel like modern inventions, but the concepts behind them were already taking shape long before the modern television industry emerged.

In many ways, the future of television had already been imagined—it simply took time for the technology to catch up.

Philco Predicta television set featuring a futuristic swivel-mounted screen design, one of the most recognizable consumer electronics designs of the late 1950s.

Archival photograph — original photographer unknown.

The Rail Zeppelin: Radical Transportation Engineering

Throughout the history of transportation, some of the most important breakthroughs have come from experiments that initially seemed unconventional or even impractical. Engineers pushing the limits of speed and efficiency have often borrowed ideas from other industries, combining technologies in ways that challenge the assumptions of their time. One of the most striking examples of this kind of experimentation appeared in Germany in the early 1930s with a machine that looked less like a train and more like an aircraft running along railroad tracks.

This experimental vehicle became known as the Rail Zeppelin.

Developed by German engineer Franz Kruckenberg, the Rail Zeppelin was introduced in 1931 as part of an effort to explore new approaches to high-speed rail transportation. At the time, conventional trains relied on powerful steam locomotives that pulled multiple railcars behind them. These engines were effective for hauling freight and passengers, but they were not optimized for extreme speed. Their heavy mechanical systems and aerodynamic inefficiencies limited how fast they could safely travel.

Kruckenberg believed that rail transport could move much faster if engineers approached the problem from a different angle.

Instead of relying on traditional locomotive technology, he designed a vehicle shaped like an elongated aircraft fuselage. The body of the Rail Zeppelin was lightweight, streamlined, and built to reduce air resistance as much as possible. Its smooth cylindrical structure narrowed toward the rear, giving it an appearance similar to the rigid airships—often called Zeppelins—that were widely known during that era.

The most striking feature of the vehicle, however, was its propulsion system.

Rather than using wheels powered by internal mechanical drives, the Rail Zeppelin was propelled by a large aircraft-style propeller mounted at the rear of the train. The propeller was connected to a powerful gasoline engine that pushed the vehicle forward by generating thrust, much like an airplane propeller pushing an aircraft through the air.

This design eliminated the heavy mechanical systems typically used to transfer power from locomotive engines to train wheels. With less weight and fewer moving parts, Kruckenberg hoped the vehicle could achieve speeds that traditional trains could not match.

During testing on German railway lines, the Rail Zeppelin demonstrated exactly that potential.

In 1931, the experimental train reached speeds of approximately 230 kilometers per hour (about 143 miles per hour) during test runs. At the time, this was an extraordinary achievement for rail transportation. The record stood for more than two decades, and the run is still widely cited as the fastest achieved by a gasoline-powered rail vehicle.

The streamlined body played a crucial role in achieving those speeds. Engineers began to understand that as trains moved faster, aerodynamic drag became one of the largest forces slowing them down, since drag rises roughly with the square of speed. The Rail Zeppelin’s aircraft-inspired design helped reduce air resistance, allowing the vehicle to move through the air more efficiently than traditional box-shaped train cars.
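A short Python sketch shows why drag matters so much at these speeds. It uses the standard drag equation, F = ½ρv²C_dA. The drag coefficient and frontal area below are placeholder assumptions, not measured values for the Rail Zeppelin, so only the ratios are meaningful.

```python
def kmh_to_ms(speed_kmh):
    """Convert kilometers per hour to meters per second."""
    return speed_kmh / 3.6


def kmh_to_mph(speed_kmh):
    """Convert kilometers per hour to miles per hour."""
    return speed_kmh / 1.609344


def drag_force(speed_kmh, drag_coefficient, frontal_area_m2, air_density=1.225):
    """Aerodynamic drag in newtons: 0.5 * rho * v^2 * Cd * A."""
    v = kmh_to_ms(speed_kmh)
    return 0.5 * air_density * drag_coefficient * frontal_area_m2 * v ** 2


def drag_power_kw(speed_kmh, drag_coefficient, frontal_area_m2):
    """Power needed to overcome drag alone, in kilowatts (force * speed)."""
    force = drag_force(speed_kmh, drag_coefficient, frontal_area_m2)
    return force * kmh_to_ms(speed_kmh) / 1000.0


# Placeholder shape values (assumptions, not historical measurements).
CD, AREA = 0.5, 6.0

force_ratio = drag_force(230, CD, AREA) / drag_force(100, CD, AREA)
power_ratio = drag_power_kw(230, CD, AREA) / drag_power_kw(100, CD, AREA)
record_mph = kmh_to_mph(230)
```

Whatever shape values are assumed, going from 100 to 230 kilometers per hour multiplies the drag force by about 5.3 and the power needed to overcome it by about 12. That is why lowering the drag coefficient pays off far more at record speeds than at ordinary ones. The conversion helper also confirms that 230 kilometers per hour is roughly 143 miles per hour.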

Despite its impressive speed, the Rail Zeppelin faced several serious limitations that prevented it from becoming a practical transportation system.

The exposed propeller at the rear posed significant safety risks, particularly in busy railway environments where passengers, workers, and infrastructure would be nearby. The design also made it difficult to attach additional railcars, meaning the vehicle could not easily carry large numbers of passengers or heavy cargo. Operational flexibility—essential for commercial rail networks—was therefore limited.

Because of these challenges, the Rail Zeppelin remained an experimental project rather than a mass-produced transportation system. The concept was set aside in favor of more conventional rail technologies, and the prototype was dismantled in 1939.

Yet the project left behind an important legacy.

While the propeller-driven propulsion system proved impractical, the Rail Zeppelin demonstrated the enormous impact that aerodynamic engineering could have on high-speed rail travel. The lessons learned from its streamlined design helped engineers better understand how air resistance affects trains moving at high velocity.

Those insights would later influence the development of modern high-speed rail systems.

Today’s high-speed trains, including France’s TGV, Germany’s ICE, and Japan’s Shinkansen, are carefully shaped to minimize aerodynamic drag. Their sleek, tapered noses and smooth surfaces allow them to travel at speeds exceeding 300 kilometers per hour while maintaining stability and efficiency.

In many ways, the Rail Zeppelin represented an early attempt to rethink rail travel through the lens of aviation engineering. Even though the design itself did not become the future of transportation, the principles it explored helped pave the way for the high-speed rail networks that now connect cities across the world.

The strange propeller-driven train of 1931 may have looked like a curiosity of its time, but it played an important role in demonstrating that rail transportation could move far faster than anyone had previously imagined.

The experimental Rail Zeppelin high-speed propeller-driven railcar seen beside a conventional steam train at a railway platform in Berlin, Germany in 1931.

Archival photograph — original photographer unknown.

The Sea Shadow: Testing Stealth at Sea

Military research has often served as one of the most powerful engines of technological innovation. When nations compete for strategic advantage, they invest heavily in experimental technologies that might yield even a small operational edge on the battlefield. During the late twentieth century, one of the most important areas of defense research was stealth technology: methods designed to reduce the ability of radar systems to detect vehicles, aircraft, and ships.

Stealth technology is most commonly associated with aircraft such as the F-117 Nighthawk and later stealth bombers, but similar ideas were also explored in naval engineering. One of the most unusual experiments in this field emerged during the 1980s with the development of an experimental vessel known as the Sea Shadow.

The Sea Shadow was built as part of a secretive research program conducted by the United States Navy, with major design and engineering support from Lockheed. The purpose of the project was not to produce an operational warship but to test whether stealth design principles—many of which had already been explored in aircraft—could also be applied effectively to ships operating on the ocean’s surface.

Traditional naval vessels are relatively easy to detect on radar. Their large hulls, vertical superstructures, and flat surfaces tend to reflect radar waves directly back toward the transmitting radar system. These strong reflections create distinctive radar signatures that allow ships to be identified and tracked from long distances.

Engineers developing stealth technology sought to disrupt that process.

Instead of reflecting radar signals back toward their source, stealth designs attempt to scatter those signals in different directions, reducing the strength of the return signal detected by radar receivers. This effect is typically achieved through carefully angled surfaces and geometric designs that redirect electromagnetic waves away from the radar transmitter.
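The geometric idea can be sketched in Python using simple mirror-like reflection in two dimensions. This is a deliberately simplified model that assumes a flat panel and a single horizontal radar beam, and it ignores diffraction, surface materials, and everything else a real radar-signature analysis would include. The function names are illustrative.

```python
import math


def reflect(direction, normal):
    """Mirror a 2D direction vector about a surface with unit normal `normal`."""
    dot = direction[0] * normal[0] + direction[1] * normal[1]
    return (direction[0] - 2 * dot * normal[0],
            direction[1] - 2 * dot * normal[1])


def deflection_from_source_deg(tilt_deg):
    """Angle between the reflected beam and the path straight back to the radar.

    `tilt_deg` is how far the flat panel leans away from vertical.
    A result of 0 means the echo returns directly to the transmitter.
    """
    incoming = (1.0, 0.0)  # beam traveling horizontally toward the ship
    tilt = math.radians(tilt_deg)
    normal = (-math.cos(tilt), math.sin(tilt))  # unit normal facing the radar
    reflected = reflect(incoming, normal)
    back = (-incoming[0], -incoming[1])  # direction pointing at the radar
    cos_angle = reflected[0] * back[0] + reflected[1] * back[1]
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding
    return math.degrees(math.acos(cos_angle))


vertical_panel = deflection_from_source_deg(0)   # conventional vertical surface
angled_panel = deflection_from_source_deg(30)    # panel leaning back 30 degrees
```

In this idealized model a vertical panel sends the beam straight back to its source, while a panel tilted by a given angle turns the reflection away by twice that angle. Leaning a surface back 30 degrees therefore sends the echo 60 degrees off the return path.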

The Sea Shadow became a floating laboratory for testing exactly these ideas.

Unlike conventional naval vessels, the ship featured an unusual faceted hull design composed of sharply angled surfaces. These surfaces were arranged so that radar waves striking the vessel would scatter in multiple directions rather than reflecting directly back toward the radar system attempting to detect it. The overall profile of the ship was also kept low and narrow, reducing the number of vertical surfaces that could produce strong radar reflections.

The vessel’s appearance was unlike that of any traditional ship. Its angular geometry and dark exterior gave it a silhouette that many observers compared to a floating stealth aircraft rather than a naval vessel.

Another unusual feature of the Sea Shadow was its Small Waterplane Area Twin Hull (SWATH) configuration. In this design, the primary buoyant structures of the ship are located below the waterline, connected to the upper platform by narrow support struts. This configuration reduces the area of the hull that interacts with surface waves, improving stability in rough seas while also minimizing the visible structure above the waterline.

Construction of the Sea Shadow took place under strict secrecy during the early 1980s. The vessel was assembled and housed inside a large submersible barge known as the Hughes Mining Barge, which served as a covered floating dry dock and kept the ship hidden from public view. For years, the ship’s existence was largely unknown outside military and defense research communities.

When images of the Sea Shadow eventually became public, the vessel’s unconventional design captured widespread attention.

Despite its futuristic appearance, the ship was never intended to serve as a combat vessel. Instead, it functioned purely as a research platform, allowing engineers to study how stealth-oriented shapes behaved in real maritime conditions. Researchers examined how radar signals interacted with the vessel’s surfaces, how ocean environments affected stealth performance, and how similar design principles might be incorporated into future naval vessels.

The results of these experiments proved valuable.

Although the Sea Shadow itself was eventually retired and never entered operational service, the research conducted during the program helped expand the understanding of radar signature reduction in naval engineering. Designers began incorporating angled surfaces, enclosed equipment, and reduced superstructure complexity into later warship designs in order to lower their radar visibility.

Many modern naval vessels now incorporate some level of radar signature management. While they are not completely invisible to radar, their shapes and structural features are often designed to reduce detection range and delay enemy targeting.

The Sea Shadow represented one of the earliest real-world demonstrations that stealth technology could extend beyond aircraft and into maritime environments.

More broadly, the project illustrated how technological ideas often migrate between different fields of engineering. Concepts originally developed for stealth aircraft—such as radar-deflecting geometry and electromagnetic signature management—proved adaptable to ships operating on the open ocean.

Though it remained an experimental craft rather than a deployed warship, the Sea Shadow played an important role in expanding the possibilities of naval design. The lessons learned from its unusual structure helped shape how modern naval vessels approach radar visibility in an era where electronic sensors increasingly dominate the battlefield.

The Sea Shadow, an experimental stealth vessel developed by the U.S. Navy during the 1980s to test radar-reducing naval technologies.

Archival photograph — original photographer unknown.

Early Experiments in Wearable Displays

Long before virtual reality headsets, augmented reality glasses, and immersive digital environments became widely known, engineers were already experimenting with the idea of placing visual displays directly in front of a user’s eyes. The concept of wearable displays may appear modern, but its origins reach back decades to a period when television itself was still a relatively young technology.

During the 1950s and 1960s, television had rapidly become a dominant form of entertainment in homes across the United States and Europe. Families gathered around living room screens to watch news broadcasts, sports events, and emerging television programming. As television technology matured, inventors began exploring new ways of delivering that viewing experience beyond the traditional stationary screen.

Among the more unusual ideas to emerge from this experimentation were television viewing glasses, a form of wearable display technology designed to allow individuals to watch television through a headset rather than on a conventional screen.

These early devices placed small electronic display elements directly in front of the wearer’s eyes. By positioning miniature screens within a headset frame, the device created the illusion of a larger image appearing in the user’s field of vision. The goal was to simulate the experience of watching a full-sized television while allowing the viewer to remain mobile or watch content privately.

Although the technology was primitive by modern standards, the concept itself was strikingly forward-looking.

In an era when televisions were still bulky appliances occupying large spaces in living rooms, the idea that a person could watch programming through a wearable device suggested an entirely different future for visual media. Instead of gathering around a shared screen, viewers could experience entertainment individually through personal display systems.

The technology behind these early wearable displays was limited by the electronics available at the time. Miniature screens were difficult to manufacture, and image resolution was often low compared to traditional televisions. Power requirements and component size also created practical challenges for wearable designs.

Even so, the prototypes demonstrated several concepts that would later become central to modern immersive technology.

By placing displays directly in front of the eyes, the systems created a controlled visual environment where external distractions could be minimized. This approach allowed engineers to experiment with how electronic images might appear within a user’s field of vision, opening the door to new possibilities for human-computer interaction.

Over the following decades, researchers expanded on these ideas in a variety of ways. In the late 1960s and 1970s, scientists began exploring head-mounted display systems capable of projecting computer-generated imagery. Some early experimental devices were even designed to overlay digital graphics onto real-world views, a concept that would later evolve into augmented reality.

By the late twentieth century, advances in computing power, miniaturized electronics, and display technology made more sophisticated wearable displays possible. Engineers developed head-mounted displays for military flight systems, simulation training, and research laboratories. These systems allowed pilots and trainees to view critical information directly within their line of sight.

Today, the same fundamental principles appear in virtual reality (VR) and augmented reality (AR) technologies used around the world.

Modern VR headsets create fully immersive digital environments where users can explore computer-generated worlds, interact with virtual objects, and experience simulations designed for entertainment, education, and professional training. Augmented reality glasses, meanwhile, overlay digital information onto the real world, blending physical and digital environments in ways that were once the subject of science fiction.

Looking back at the television viewing glasses developed during the 1960s, it becomes clear that the core idea behind these technologies has been evolving for decades. Engineers were already experimenting with ways to bring electronic images closer to the human eye long before modern processors and graphics systems made immersive experiences practical.

What once appeared to be a curious novelty now stands as an early step in the development of wearable computing.

Those simple television glasses hinted at a future in which screens would no longer be confined to walls or desks. Instead, displays would become personal, portable, and immersive—reshaping how humans interact with information, entertainment, and the digital world itself.

Experimental wearable television viewing glasses developed during the 1960s as an early attempt at personal display technology.

Archival photograph — original photographer unknown.

The Camera That Mapped the War — Aerial Reconnaissance and the Kodak K-24

Before satellites surveyed the battlefield from orbit and long-endurance drones streamed real-time video across encrypted networks, the map of war was drawn through photography carried into the sky. Military planners relied on images captured by reconnaissance aircraft to understand enemy territory, troop movements, and industrial production. Among the most important tools used in that effort during the Second World War was the Kodak K-24 aerial reconnaissance camera, a rugged photographic system designed specifically for high-altitude intelligence gathering.

The K-24 was engineered for one purpose: to capture clear, reliable images from aircraft operating thousands of feet above hostile territory. Mounted within specialized reconnaissance planes and modified bombers, the camera recorded detailed photographs of enemy infrastructure that could not be observed from the ground. Every mission had the potential to reveal information capable of altering the course of military planning.

The photographs produced by the K-24 were not artistic compositions. They were instruments of strategy.

Reconnaissance crews used the camera to photograph rail networks, supply depots, shipyards, bridges, airfields, factories, submarine pens, artillery positions, and troop concentrations. The images captured by these cameras allowed analysts to identify new construction, track logistical routes, and determine whether bombing missions had successfully damaged key targets.

In many cases, a single photographic run could expose vulnerabilities in enemy defenses that had previously gone unnoticed.

Technically, the K-24 was a powerful piece of equipment for its time. The camera used large-format film, which allowed it to capture extremely high levels of detail even when operating at significant altitude. Combined with precision lenses and a durable mechanical shutter system, the camera could record sharp images while the aircraft carrying it moved at high speed.

Photographic clarity was critical. Intelligence analysts needed to examine the resulting images for minute details such as railcar positions, aircraft parked on runways, or the construction progress of defensive fortifications. Even subtle changes between photographs taken days or weeks apart could indicate major shifts in military activity.

Once reconnaissance aircraft returned from their missions, the film they carried became the raw material for intelligence analysis.

Teams of specialists processed the film and studied the photographs using magnifying devices and stereoscopic viewers, tools that allowed analysts to examine pairs of overlapping images in three dimensions. This stereoscopic technique made it possible to measure building heights, identify terrain features, and understand how structures were arranged across large areas of land.
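The height measurement mentioned above can be illustrated with the standard parallax-height relation from photogrammetry. The sketch below is only an illustration, not a record of how wartime interpreters actually worked: the function name and the sample numbers (flying height and parallax readings) are assumptions chosen for the example, not figures from K-24 missions.

```python
def object_height(flying_height_m, base_parallax_mm, differential_parallax_mm):
    """Estimate an object's height from a stereo pair of vertical photographs.

    Uses the parallax-height relation h = H * dp / (p_b + dp), where
      H   = flying height above the base of the object,
      p_b = absolute stereoscopic parallax measured at the object's base,
      dp  = difference in parallax between the object's top and its base.
    All input values here are hypothetical illustration values.
    """
    if base_parallax_mm <= 0:
        raise ValueError("base parallax must be positive")
    if differential_parallax_mm < 0:
        raise ValueError("differential parallax cannot be negative")
    return (flying_height_m * differential_parallax_mm
            / (base_parallax_mm + differential_parallax_mm))


# Hypothetical example: aircraft 6,000 m above the ground, 90 mm base
# parallax, 0.3 mm parallax difference between roof and ground level.
height = object_height(6000, 90, 0.3)
print(f"Estimated height: {height:.1f} m")
```

With these assumed readings the estimate comes out at roughly 20 meters. A parallax difference of a fraction of a millimeter on the print is enough to separate a tall building from a low one, which is why image sharpness mattered so much.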

Through careful analysis, these photographs were transformed into detailed maps of enemy infrastructure.

Entire operations depended on this information. Strategic bombing campaigns required precise targeting data that only aerial photography could provide. Naval operations used reconnaissance images to track ship movements and identify harbor defenses. Ground forces relied on photographic intelligence to understand terrain conditions before launching major advances.

One of the most significant examples of aerial reconnaissance shaping military strategy occurred during the planning of the D-Day landings in Normandy in 1944. Allied planners studied extensive aerial photography of the French coastline to identify beach obstacles, defensive bunkers, artillery placements, and transportation routes behind the German defensive lines.

Without the intelligence gathered by reconnaissance cameras like the K-24, planning such an operation would have been far more dangerous and uncertain.

The work required to obtain these photographs was itself extremely risky.

Reconnaissance aircraft often operated deep inside enemy territory, flying long missions over heavily defended regions. Unlike bombers, many reconnaissance aircraft carried minimal defensive armament in order to remain lighter and faster. Their survival depended on altitude, speed, and careful flight planning rather than firepower.

If these aircraft were intercepted by enemy fighters or targeted by anti-aircraft defenses, their chances of escape could be slim. Yet crews continued flying these missions because the intelligence they gathered was considered indispensable.

Every roll of exposed film carried back to base had the potential to change how commanders understood the battlefield.

Today, aerial reconnaissance has evolved dramatically. Modern intelligence systems rely on satellites capable of imaging the Earth from orbit, high-altitude surveillance drones, and sensor platforms that can detect heat signatures, radar emissions, and electronic signals. Advanced imaging technologies can now capture data across multiple wavelengths of light, revealing details that traditional photography could never detect.

Despite these advances, the foundation of modern reconnaissance was built during an earlier era when intelligence depended on mechanical cameras, chemical film, and human courage.

Devices like the Kodak K-24 represent that foundation. Long before digital sensors and orbital surveillance networks existed, wars were mapped through precision optics carried into hostile skies. The images those cameras captured allowed commanders to see beyond the front lines and understand the shape of the battlefield itself.

In that sense, the K-24 was more than a camera. It was one of the instruments that allowed nations to observe war from above and to transform images into strategy.

Kodak K-24 aerial reconnaissance camera used by American forces during World War II to capture high-altitude intelligence photographs of enemy positions.

Archival photograph — original photographer unknown.

The Future That Almost Arrived Early — The 1961 Thin Television Recording Concept

In June of 1961, visitors attending the Home Furnishings Market in Chicago encountered a technological concept that seemed almost impossible for its time. Among the displays presented to the public was an experimental television design only four inches thick, paired with a built-in automatic timing system capable of recording television broadcasts for later playback.

To modern audiences, surrounded by flat-panel displays and digital media platforms, the idea might appear unremarkable. In the early 1960s, however, it represented a vision that stood far outside the everyday reality of consumer electronics.

At the time, televisions were still dominated by cathode-ray tube (CRT) technology, which required a large, deep vacuum tube and bulky supporting electronics. These sets were typically enclosed in heavy wooden cabinets and occupied a prominent place in living rooms. Many televisions looked more like pieces of furniture than electronic devices, blending into the décor of a household rather than standing out as a technological centerpiece.

Because of the CRT’s physical requirements, televisions were thick, heavy, and difficult to redesign in radically different forms.

Against that backdrop, the prototype shown in Chicago appeared strikingly futuristic.

The display was remarkably thin compared with the televisions people were accustomed to seeing. Although it relied on experimental engineering rather than the flat-panel technologies used today, the design suggested a future in which televisions would no longer dominate a room with their physical bulk.

Instead, they could become sleek electronic displays integrated naturally into a home’s architecture.

Equally forward-thinking was the system demonstrated alongside the display: an automatic timing device designed to record television programs without requiring someone to be present when the broadcast occurred.

This concept directly challenged the traditional structure of television broadcasting.

For decades, viewers had been required to watch programs precisely when they were transmitted. Television schedules controlled the relationship between broadcasters and audiences. If a show aired at a particular time, the only way to see it was to be in front of the television at that exact moment.

The timing system introduced in 1961 suggested a different model.

By presetting the device in advance, viewers could instruct the television to capture a broadcast automatically. The program would be stored for playback later, allowing the household to watch it at a more convenient time.

In essence, the system anticipated the behavior that would later become known as time-shifted viewing.

The concept foreshadowed several technologies that would eventually reshape how people consume media. In the 1970s and 1980s, the arrival of video cassette recorders (VCRs) allowed households to record television programs onto magnetic tapes. By the late 1990s and early 2000s, digital video recorders (DVRs) made the process even easier, allowing viewers to schedule recordings automatically and store large numbers of programs for later playback.

In the twenty-first century, streaming services would extend this idea even further, making entire libraries of content available on demand.

The 1961 prototype, however, appeared well over a decade before home video recorders became common, and decades before DVRs and streaming services existed.

Thin television displays did not become widespread until the late twentieth and early twenty-first centuries with the development of plasma screens, liquid crystal displays (LCDs), and later OLED panels. These technologies eliminated the deep cabinet designs required by CRT televisions, allowing displays to become dramatically thinner and lighter.

Similarly, practical home recording systems required advances in magnetic storage, electronic control systems, and affordable manufacturing techniques that were still years away from maturity in the early 1960s.

What makes the Chicago demonstration so fascinating is not that it immediately changed the television industry. It did not. Consumers would continue using bulky CRT televisions for decades afterward.

What the demonstration revealed instead was something deeper about the nature of technological innovation.

Engineers and designers often imagine the next stage of technology long before the materials, electronics, or manufacturing capabilities exist to fully support it. Prototypes and conceptual demonstrations serve as glimpses of possibilities that may take years—or even generations—to become practical.

The thin television and automatic recording concept presented in 1961 represented exactly that kind of glimpse.

It showed a future where screens could be compact and elegant, where viewers could record programs automatically, and where control over media consumption could shift from broadcasters to audiences.

Many innovations begin this way—not as finished products ready for immediate adoption, but as visions of what technology might eventually become.

In Chicago during the summer of 1961, visitors witnessed one of those visions. The world would not fully catch up with it for several more decades.

Experimental thin television display shown at the Chicago Home Furnishings Market in 1961 featuring an automatic timing device designed to record television programs for later viewing.

Archival photograph — original photographer unknown.

The Colossus of the Battlefield — The Captured Italian Heavy Artillery of Caporetto

In the autumn of 1917, the Italian Front of the First World War became the site of one of the most dramatic military collapses of the conflict. The Battle of Caporetto, launched by combined German and Austro-Hungarian forces, broke through Italian defensive lines with devastating speed. Using coordinated artillery barrages, infiltration tactics, and poison gas, the Central Powers pushed Italian forces into a chaotic retreat that carried them back across northeastern Italy to the Piave River.

During the advance, large quantities of Italian equipment were abandoned or captured. Among the most striking of these trophies were enormous heavy artillery pieces—machines designed to deliver devastating firepower against fortified positions and entrenched defensive systems.

One such weapon appears in the photograph: a massive Italian heavy artillery piece mounted on an oversized transport carriage, captured by Austro-Hungarian troops during the Caporetto breakthrough. Even in still imagery, the machine appears imposing. Its massive barrel, reinforced structural supports, and enormous traction wheels reveal a weapon engineered for destructive power rather than mobility.

To soldiers standing beside it, the gun must have seemed almost monumental.

Heavy artillery like this represented the industrial transformation of warfare that defined the First World War. In earlier conflicts, battlefield tactics had often revolved around maneuver, cavalry movements, and infantry formations advancing across open terrain. By the early twentieth century, however, advances in metallurgy, explosives, and mechanical engineering had reshaped the battlefield.

The First World War introduced a new reality: artillery had become the dominant force in combat.

Massive guns capable of launching high-explosive shells across long distances could obliterate trenches, destroy fortified bunkers, and devastate troop concentrations before infantry ever advanced. Entire sections of the front were reshaped by sustained bombardments that lasted hours, days, or even weeks.

Weapons like the captured Italian heavy artillery piece were designed specifically for that kind of warfare.

These guns fired shells weighing dozens or even hundreds of pounds, capable of demolishing reinforced structures or collapsing trench networks. Their sheer size allowed them to generate enormous explosive force, making them critical tools for breaching defensive lines that had been fortified with concrete, steel, and barbed wire.

Yet the power of such artillery came with significant logistical challenges.